Screenflow: an unfinished attempt at a cross-platform server-driven UI at Uber

Growing up, I loved reading the stories of small teams doing amazing things at Xerox PARC and Bell Labs, about the early days of UNIX and C. At the best of times, the people involved were not bound by strict deadlines and spent many days playing with ideas and trying new things. When doing software engineering for a large tech company, it is rare to have an opportunity to invent a whole new stack.

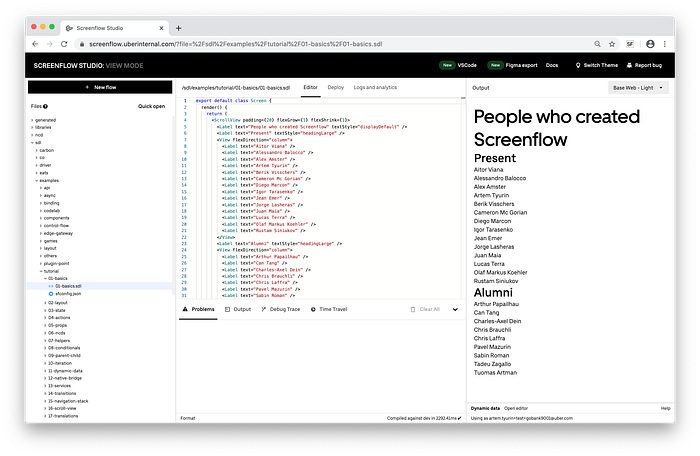

From October 2017 till July 2020 I was part of the team at Uber that had such an opportunity. The project was called Screenflow. Most of our team was based in the Amsterdam office, and at its largest the team had 14 people.

The problem

Uber has many apps (the rider and driver apps, Uber Eats, Uber Freight) available on iOS, Android, and the web. Since most app features are available on all platforms, they need to be written three times, most often by different developers because of the different languages and toolchains. That takes time and requires careful coordination. Another problem is the unpredictable wait time in the App Store after an update is submitted, which makes it hard to ship rapidly changing UI. In our case, one example of such UI was payment forms. If we couldn’t quickly update a particular form with a new terms and agreements checkbox, we couldn’t accept payments, and that is business-critical for an app like Uber.

One popular approach, called server-driven UI, is for the app to fetch some form of UI configuration from the server. We set out to consolidate all previous attempts at a server-driven UI within the company. Our goal was to deliver a “batteries included” framework for all developers in product teams at Uber. It had to be easy to extend the framework with team-specific native components and APIs. Because the UI definitions come from the server and could be updated at any point, we had to make it safe, secure, and predictable. We also didn’t want to be limited to producing static UI; the screens had to be interactive.

This article is a recollection of technologies that we’ve built to achieve that goal.

Terminology

To refer to the UI produced in our framework we used the words “screen” or “flow”. This is the data that includes the UI definitions, business logic, and metadata. The word “screen” was not very accurate, since some features did not cover the whole screen, but were embedded in an existing native UI.

Every flow was addressed with a “flowId”, a string similar to a URL on the web.

Domain-specific language

At Uber, mobile code is written in Swift and Java, and web code is written in JavaScript with React. We needed a common way to describe the UI and the associated business logic. Some similar internal projects used JSON, XML, or YAML files to store that data. They all shared one downside: there was no convenient way to embed code in them.

We created our own domain-specific language based on a subset of TypeScript. We took many concepts from React, like immutable state and JSX. As in React, the UI was composed of components connected by reactive “props”, that is, data passed from parent components to children.

The source files had the filename extension “.sdl”, which stood for “Screenflow Definition Language”.

In our DSL the only allowed top-level definitions were components, and only a subset of expressions was allowed in the render block and the state initialization. That introduced some limitations but made it easier to make performance guarantees.

Our language was compiled down to our own intermediate format, called SIR (Screenflow Intermediate Representation), which was used to transfer data to the client runtimes. Business logic (component methods) was compiled to JavaScript, while the state and the layout parts were extracted as static data. That allowed us to render many basic screens without starting the JavaScript VM.

Our compiler injected additional type annotations at the AST (Abstract Syntax Tree) level and passed the modified program to the TypeScript compiler for the final check. For example, the component state was automatically marked as read-only to prevent direct mutations, and the return type of any action was a partial of the state to ensure the state stayed correct after modifications. The TypeScript configuration (tsconfig.json) was not exposed: strict mode was enabled out of the box and the “any” type was not allowed. Given the good type inference of TypeScript, our users had to write very few type annotations.
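To make the effect of those injected annotations concrete, here is a minimal sketch in plain TypeScript rather than the actual DSL. The names `CounterState`, `Action`, `increment`, and `applyAction` are hypothetical and only illustrate the read-only state and partial return type described above.

```typescript
// Hypothetical component state; in this sketch it is always passed as read-only.
interface CounterState {
  count: number;
  label: string;
}

// An action receives a read-only state and returns only the fields it changes.
type Action<S> = (state: Readonly<S>) => Partial<S>;

const increment: Action<CounterState> = (state) => {
  // state.count += 1; // would not compile: 'count' is a read-only property
  return { count: state.count + 1 };
};

// A runtime-like helper merges the partial result into a new state object.
function applyAction<S>(state: Readonly<S>, action: Action<S>): Readonly<S> {
  return { ...state, ...action(state) };
}

const initial: Readonly<CounterState> = { count: 0, label: "Taps" };
const next = applyAction(initial, increment);
```

Returning something like `{ cuont: 1 }` or `{ count: "1" }` from `increment` would be rejected by the type checker, which is the kind of guarantee the partial return type is meant to give.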

Our compiler also reported additional errors for incorrect TypeScript syntax, since many developers were coming from Swift and Java. We invested in good error messages, and that made onboarding easier.

Normal actions allowed only synchronous code. To use networking or async native APIs, we introduced async actions. They were implemented as async generators, where the “await” keyword is available and multiple states can be yielded.
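The following sketch shows how an async action built on an async generator could look in plain TypeScript. It is an illustration under assumptions: `ProfileState`, `fetchName`, `loadProfile`, and `runAsyncAction` are hypothetical names, and the loop that merges yielded patches is a guess at what a runtime would need to do, not the Screenflow implementation.

```typescript
interface ProfileState {
  loading: boolean;
  name: string | null;
  error: string | null;
}

// Hypothetical stand-in for a network call or an async native API.
async function fetchName(): Promise<string> {
  return "Ada";
}

// An async action yields a partial state for every intermediate step.
async function* loadProfile(
  state: Readonly<ProfileState>
): AsyncGenerator<Partial<ProfileState>> {
  if (state.loading) {
    return; // a load is already in flight, nothing to do
  }
  yield { loading: true, error: null };
  try {
    const name = await fetchName();
    yield { loading: false, name };
  } catch (e) {
    yield { loading: false, error: String(e) };
  }
}

// Hypothetical runtime loop: merge each yielded patch and record the resulting state.
async function runAsyncAction<S>(
  initial: Readonly<S>,
  action: (state: Readonly<S>) => AsyncGenerator<Partial<S>>
): Promise<Readonly<S>[]> {
  const states: Readonly<S>[] = [];
  let current = initial;
  for await (const patch of action(current)) {
    current = { ...current, ...patch };
    states.push(current);
  }
  return states;
}
```

Each `yield` gives the runtime a chance to rerender, so a loading indicator can appear before the awaited call resolves.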

Building on top of TypeScript allowed us to reuse an API for the AST traversal and the type checker.

As with any typed language, the external environment had to be typed as well. In our case, the external environment consisted of native UI components and native APIs. TypeScript uses definition files (.d.ts) to describe external types, and they can contain only types. Because we needed a way to share not just types but also default values (e.g. the default value for the “enabled” prop on <Button /> is “true”) between all platforms, we couldn’t use the .d.ts format as is, so we used ordinary TypeScript files that were linted accordingly.
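As a rough illustration of keeping types and default values together, a definition for a button might look something like the sketch below. Only the “enabled” default of “true” comes from the text above; `ButtonProps`, `buttonDefaults`, `title`, `onPress`, and `resolveButtonProps` are hypothetical names.

```typescript
// Hypothetical definition of a native Button component.
interface ButtonProps {
  title: string;
  enabled?: boolean;
  onPress?: () => void;
}

// Defaults live next to the types as plain values, which a .d.ts file cannot express.
const buttonDefaults: { enabled: boolean } = {
  enabled: true,
};

type ResolvedButtonProps = ButtonProps & { enabled: boolean };

// Explicitly passed props win over the defaults.
function resolveButtonProps(props: ButtonProps): ResolvedButtonProps {
  return { ...buttonDefaults, ...props };
}
```

Because the defaults are ordinary values in the source file, a tool that walks the AST can read them and emit the same values into generated native code.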

Default values were extracted during the AST traversal and corresponding structs and classes were generated to be used in the native code. This was done to ensure correct integration with the native code. I’ll expand more on that further in the article.

The DSL did not support direct imports of NPM packages, as we wanted control over the bundle size. However, we constantly added requested components and APIs to the runtime.

Our compiler had some bundler features as well. For example, it converted images referenced as ECMAScript imports to base64 strings embedded in the output format.

Server

All screen definitions lived in a shared monorepo where product teams stored their screens. For every commit, the CI pipeline invoked the compiler and stored the compilation artifacts in a database.

Data hydration was implemented by marking various parts of the state as dynamic. On the server, it was possible to associate a particular flowId with an endpoint that provided additional data. For example, in a user profile form, you would mark the firstName and lastName state values as dynamic, and the runtime would automatically initialize them with the values provided by the user profile endpoint.
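A minimal sketch of the hydration idea, using the profile form example above. The `FlowDefinition` shape, the `dynamicKeys` field, the `agreed` field, and the flowId value are assumptions made for illustration; the real format is not described here.

```typescript
type State = Record<string, unknown>;

// Hypothetical compiled flow: static initial state plus the keys marked as dynamic.
interface FlowDefinition {
  flowId: string;
  initialState: State;
  dynamicKeys: string[];
}

// Only keys marked as dynamic are overwritten with endpoint data; everything else is ignored.
function hydrate(flow: FlowDefinition, endpointData: State): State {
  const state: State = { ...flow.initialState };
  for (const key of flow.dynamicKeys) {
    if (key in endpointData) {
      state[key] = endpointData[key];
    }
  }
  return state;
}

const profileFlow: FlowDefinition = {
  flowId: "profile/edit",
  initialState: { firstName: "", lastName: "", agreed: false },
  dynamicKeys: ["firstName", "lastName"],
};
```

Restricting hydration to declared keys keeps the endpoint from silently changing state that the screen author did not mark as dynamic.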

We had many talks and plans about doing server components that run on the server and can use the server state. In December 2020, React announced server components that had many similarities to our proposed designs.

Mobile and web runtimes

When a product team wanted to display a Screenflow screen, they would launch the Screenflow Mobile Runtime, passing the flowId as the parameter. The runtime then loaded the associated flow (either from the local cache or from the server) and initialized the native state. When the user interacted with the UI, for example by pressing a button, the state was serialized and passed to JavaScript. The JS code produced a new version of the state, which was serialized back to native. The runtime then did the necessary rerendering.

We decided not to use the “virtual DOM” approach used by React and to go with the simpler mechanism of live bindings. This was only possible because the DSL restricted arbitrary control flow and function calls in the render section. By sacrificing some code flexibility compared to React, we didn’t have to pay the price of doing the diff and patch operations for UI trees.
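The round trip between native and JavaScript can be sketched as follows. JSON is used as the serialization format purely as an assumption, and `actions`, `toggleTerms`, and `dispatch` are hypothetical names; the native side is simulated by a string variable.

```typescript
type State = Record<string, unknown>;
type Action = (state: State) => Partial<State>;

// "JavaScript side": compiled actions, looked up by name.
const actions: Record<string, Action> = {
  toggleTerms: (state) => ({ accepted: !state["accepted"] }),
};

// Receives the serialized state, runs the action, and returns the serialized result.
function dispatch(actionName: string, serializedState: string): string {
  const action = actions[actionName];
  if (action === undefined) {
    throw new Error(`Unknown action: ${actionName}`);
  }
  const state = JSON.parse(serializedState) as State;
  return JSON.stringify({ ...state, ...action(state) });
}

// "Native side": holds the state as an opaque string between interactions.
let nativeState = JSON.stringify({ accepted: false, title: "Payment" });
nativeState = dispatch("toggleTerms", nativeState);
```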
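To illustrate the difference from tree diffing, here is a small sketch of live bindings. It assumes each view property depends on exactly one state key, which is a simplification; `FakeView`, `Binding`, and `BindingTable` are hypothetical names, with `FakeView` standing in for a native view.

```typescript
type State = Record<string, unknown>;

// Hypothetical stand-in for a native view with settable properties.
class FakeView {
  props: Record<string, unknown> = {};
  writes = 0;

  set(prop: string, value: unknown): void {
    this.props[prop] = value;
    this.writes++;
  }
}

// A binding ties one view property to one state key.
interface Binding {
  view: FakeView;
  prop: string;
  stateKey: string;
}

class BindingTable {
  private byKey = new Map<string, Binding[]>();

  // Register the binding and push the initial value into the view.
  bind(binding: Binding, state: State): void {
    const list = this.byKey.get(binding.stateKey) ?? [];
    list.push(binding);
    this.byKey.set(binding.stateKey, list);
    binding.view.set(binding.prop, state[binding.stateKey]);
  }

  // Touch only the views bound to keys whose value changed; no UI tree is diffed.
  update(prev: State, next: State): void {
    for (const key of Object.keys(next)) {
      if (prev[key] === next[key]) continue;
      for (const b of this.byKey.get(key) ?? []) {
        b.view.set(b.prop, next[key]);
      }
    }
  }
}
```

Since the set of bindings is known once the screen is loaded, a state update costs one comparison per state key instead of a traversal of the whole UI tree.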

The greatest challenge in implementing the cross-platform UI is unsurprisingly rendering and layout. One approach is to implement your own rendering pipeline (Flutter does this). It is quite portable but does not fully match the native look and feel of the particular platform. The important goal for us was to seamlessly integrate with the existing native UIs. That meant we needed to reuse all the existing native components and themes. And somehow this had to be exposed to the developers.

We went with the Flexbox layout, which is one of the layout systems available on the web. Layout was handled by YogaLayout on iOS and FlexboxLayout on Android. For the web runtime, we compiled directly to React code.

To execute JavaScript we used the built-in JavaScriptCore on iOS and Duktape on Android, the latter chosen for its small runtime size.

The most challenging part was making sure that native components worked properly with the Flexbox layout system. Async features like callbacks and promises were also not straightforward with Duktape.

We encountered performance problems with FlexboxLayout on Android and landed a few pull requests to fix them. We also experimented with Stretch, a newer and promising layout library, but didn’t end up switching due to the lack of example code and memory leak issues (which could have been caused by incorrect integration on our side).

IDE

At Uber, iOS developers most often use Xcode, Android developers use IntelliJ IDEA or Android Studio, and web developers mostly use Visual Studio Code. We had the option of developing plugins for all of these IDEs, but it was hard to decide which one to do first. These days the Language Server Protocol makes it easier to support a new language; however, we wanted a tighter integration.

We were not satisfied with how slow things were when developing native app features. For most changes, the app had to be restarted. For our framework, we wanted live previews for all platforms.

It was also important to us to make the setup as frictionless as possible: everything had to work out of the box. This is why we decided to bet on a browser-based IDE. The entire development cycle was done from the browser. You just typed the URL and your environment was ready. The IDE had access to the monorepo, so it was easy to browse existing files. Since the compiler was written in TypeScript, it was trivial to run inside the browser. Combined with the live web preview in the IDE, this resulted in a very quick edit and run cycle. For the mobile preview, we built companion apps that connected to the web IDE.

To provide the usual git experience, we’ve built a CLI that proxied the local file system to the browser. That way, it was possible to clone the monorepo locally and use the local files in the browser-based IDE.

As with tools like Figma, browser-based experience allowed us to quickly share the progress between designers, developers, and product managers. We’ve also prototyped a tool that produces the UI in our language directly from Figma designs.

Our intern created a time-traveling debugger inside the IDE, which was possible because all state updates were immutable.

Because some data could be provided only by an actual device, we came up with preview components that supply mock data to components when previewing them in the IDE. When SwiftUI debuted later, we saw that its authors had arrived at the same solution.

We were expecting to drop the CLI eventually, as the Google Chrome team’s proposal to expose the local file system natively in the browser was (and still is) making good progress.
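The reason immutability makes time travel cheap can be shown with a small sketch: if no state object is ever mutated, a debugger only needs to keep references to past states and move a cursor. `StateHistory` is a hypothetical name and this is not the debugger that was actually built.

```typescript
// Keeps every past state by reference; safe only because states are never mutated.
class StateHistory<S> {
  private snapshots: Readonly<S>[];
  private cursor = 0;

  constructor(initial: Readonly<S>) {
    this.snapshots = [initial];
  }

  get current(): Readonly<S> {
    return this.snapshots[this.cursor];
  }

  // Record a new state; any "future" states left over from stepping back are dropped.
  push(next: Readonly<S>): void {
    this.snapshots = this.snapshots.slice(0, this.cursor + 1);
    this.snapshots.push(next);
    this.cursor = this.snapshots.length - 1;
  }

  // Step one state back, stopping at the initial state.
  back(): Readonly<S> {
    this.cursor = Math.max(0, this.cursor - 1);
    return this.current;
  }

  // Step one state forward, stopping at the latest state.
  forward(): Readonly<S> {
    this.cursor = Math.min(this.snapshots.length - 1, this.cursor + 1);
    return this.current;
  }
}
```

With mutable state, each snapshot would need a deep copy to stay valid; with immutable updates, storing the reference is enough.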

Later we’ve also built a Visual Studio Code plugin that connected to the browser preview window via WebSockets.

Type safety

With server-driven UI you get a very different cadence of releases for different parts. Screens can be updated at any time, but the native API is still a part of the usual app release cycle. It was important to verify that a screen used only the APIs that were available in a particular version of the app. In our setup, an older runtime could fetch a recently updated screen. That meant that without proper safety, a call to a non-existent API would cause a runtime crash.

In Screenflow, all native components and APIs were defined in definition files (.d.sdl files). We built code generation tooling to produce Swift protocols and Java interfaces from .d.sdl files. Mobile runtimes used those to match the native implementation. That resulted in a type-safe bridge between JavaScript and native. This is similar to the codegen part of React Native’s Fabric/Turbo Modules architecture.

All definitions were versioned and every screen had to specify a version. When pushing screen changes to the monorepo, the CI invoked the compiler on all previous versions to ensure that the screen would run on all existing runtimes.
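The idea behind that check can be sketched as follows. The real check was done by the compiler and the type checker; this sketch reduces it to name lookups and assumes that a screen is checked against every definition version from its declared version onward. `DefinitionVersion`, `ScreenManifest`, `incompatibleVersions`, and the API names are all hypothetical.

```typescript
// Hypothetical summary of what each definition version exposes to screens.
interface DefinitionVersion {
  version: number;
  apis: string[];
}

// Hypothetical summary of a screen: its declared version and the APIs it uses.
interface ScreenManifest {
  flowId: string;
  declaredVersion: number;
  usedApis: string[];
}

const definitions: DefinitionVersion[] = [
  { version: 1, apis: ["Button", "Text"] },
  { version: 2, apis: ["Button", "Text", "Image"] },
  { version: 3, apis: ["Button", "Text", "Image", "haptics.tap"] },
];

// Returns the versions, from the declared one onward, that lack an API the screen uses.
function incompatibleVersions(
  manifest: ScreenManifest,
  allDefinitions: DefinitionVersion[]
): number[] {
  return allDefinitions
    .filter((d) => d.version >= manifest.declaredVersion)
    .filter((d) => manifest.usedApis.some((api) => d.apis.indexOf(api) === -1))
    .map((d) => d.version);
}
```

A CI step could then reject a change whenever the returned list is not empty, so a screen that uses `Image` could not claim to support version 1.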

We also wanted safe networking. At that time, all endpoints at Uber were described with Thrift. We wrote tooling that converted those endpoints into typed TypeScript functions that invoked the network request. To use networking in Screenflow, you imported an endpoint method and simply called it inside an async action.
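A generated endpoint function might have looked roughly like the sketch below. Everything in it is an assumption made for illustration: the request and response types, the `Transport` signature, the `/profile/get` path, and the `makeGetProfile` factory are hypothetical and do not come from the actual tooling.

```typescript
// Hypothetical types, as they might be generated from a Thrift service definition.
interface GetProfileRequest {
  userId: string;
}

interface GetProfileResponse {
  firstName: string;
  lastName: string;
}

// Hypothetical transport supplied by the runtime; it performs the actual network request.
type Transport = (path: string, body: unknown) => Promise<unknown>;

// The generated function gives the untyped transport a typed signature.
function makeGetProfile(transport: Transport) {
  return async (request: GetProfileRequest): Promise<GetProfileResponse> => {
    const response = await transport("/profile/get", request);
    return response as GetProfileResponse;
  };
}
```

From the screen author's point of view, the call is then an ordinary typed function call, so a misspelled field or a wrong argument type is caught at compile time rather than on the device.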

Alternatives and inspiration

Why did we need to build our own solution? Let’s consider a few alternatives.

React Native was not chosen because we wanted a small runtime and at that time it didn’t fit our constraints. One of the first apps that used our framework was Uber Lite, which is an extremely lightweight version of the Uber app built for developing markets, and it had a total size constraint of 5 megabytes for the whole app.

We did not use Flutter or any of the webview-based frameworks because we wanted to provide the same native components that are used across the native parts of the app.

Of course, we stood on the shoulders of giants and took a lot of inspiration from React and React Native, Expo, Airbnb’s Lona, Flutter, SwiftUI, Jetpack Compose. We’ve made a good prediction by betting on TypeScript in 2018, and in 2021 it is going stronger than ever.

Adoption

We were aiming for a zero-config out-of-the-box framework, so the product engineers could feel as productive as possible.

Many mobile developers were happy to work in an environment where they had live app previews and close to zero build times. It was easy to onboard even people with no previous experience in mobile or web development. Some backend developers were able to build new screens after just 2 days.

By July 2020, at least 16 flows had been rolled out to production across the Uber and Uber Eats apps. They ranged from basic static screens to complex dynamic screens that spanned multiple pages, were hydrated with backend data, reacted to user input, and made service calls to the backend.

Future predictions

Solutions like React Native and Flutter seem to work well when building apps from scratch. Integration with existing native apps is usually not so smooth. I think there is a niche for projects that are primarily focused on side-by-side usage with the native UI.

It seems that cross-platform layout is still one of the main challenges in this space. The React Native team did a good job of making Flexbox available cross-platform. However, in our experience, Flexbox itself is not the most straightforward option for doing mobile layouts. Modern frameworks like SwiftUI and Jetpack Compose use simpler primitives (like HStack and VStack). This problem is hard because there are not many open standards for this, and it probably requires creating a new one.

There is also demand for full-stack solutions that handle not only the rendering part but also the delivery, data storage, editing experience, versioning, native API type safety, etc.

The end

In July 2020 the whole developer platform department in Amsterdam was unexpectedly shut down and our team was a part of it. Some people (including myself) left the company, others went to work in different teams. I don’t know what happened with the project after that.

If you have any questions about the article, feel free to message me at artem.tyurin@gmail.com.